
    plt.imshow(cv2.cvtColor(v.get_image(), cv2.COLOR_BGR2RGB))
    plt.show()

Figure 5: Prediction example

Conclusion

If you have any questions or want to chat with me, feel free to contact me via email or social media.

    │ │ ├── train.txt # list of training set image names
    │ │ └── val.txt # list of validation set image names

The generated datasets/train_voc is the dataset used for training.

Open unet.ipynb and select unet as the Python interpreter to start training.

The dataset is divided into a training set and a validation set, whose file lists are read from ImageSets/Segmentation/train.txt and ImageSets/Segmentation/val.txt respectively. The lists are then wrapped in a tf.…Sequence object through the UnetDataset class, which makes it convenient to train directly with model.fit later.

    import os

    dataset_path = 'datasets/train_voc'

    # read dataset txt files
    with open(os.path.join(dataset_path, "ImageSets/Segmentation/train.txt"), "r", encoding="utf8") as f:
        train_lines = f.readlines()
    with open(os.path.join(dataset_path, "ImageSets/Segmentation/val.txt"), "r", encoding="utf8") as f:
        val_lines = f.readlines()

    train_batches = UnetDataset(train_lines, INPUT_SHAPE, BATCH_SIZE, NUM_CLASSES, True, dataset_path)
    val_batches = UnetDataset(val_lines, INPUT_SHAPE, BATCH_SIZE, NUM_CLASSES, False, dataset_path)

    STEPS_PER_EPOCH = len(train_lines) // BATCH_SIZE
    VALIDATION_STEPS = len(val_lines) // BATCH_SIZE // VAL_SUBSPLITS

The __getitem__ method returns one batch of batch_size samples, consisting of the original images (images) and the label images (targets). Because the model has a fixed input shape, the images are resized in the process_data method. Data augmentation can also be added during training; a simple flip is used here.

    class UnetDataset(tf.…Sequence):
        def __init__(self, annotation_lines, input_shape, batch_size, num_classes, train, dataset_path):
            self.annotation_lines = annotation_lines
            …
            self.dataset_path = dataset_path

        def __len__(self):
            return math.…
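As a rough illustration of the batching that `UnetDataset` is described as doing, here is a minimal, framework-free sketch. It is not the article's class: `BatchedPairs`, the `seed` parameter, and the 50% flip probability are hypothetical, images are plain nested lists rather than arrays, and the batch count is assumed to be the ceiling of samples divided by batch size, since the source text stops after `math.` in `__len__`.

```python
import math
import random


class BatchedPairs:
    """Framework-free stand-in for a batched (image, target) dataset."""

    def __init__(self, samples, batch_size, train, seed=0):
        # samples: list of (image, target) pairs, each a 2D list of pixel values
        self.samples = samples
        self.batch_size = batch_size
        self.train = train
        self.rng = random.Random(seed)

    def __len__(self):
        # Assumption: the last, possibly smaller, batch is kept.
        return math.ceil(len(self.samples) / self.batch_size)

    def __getitem__(self, index):
        start = index * self.batch_size
        batch = self.samples[start:start + self.batch_size]
        images, targets = [], []
        for image, target in batch:
            if self.train and self.rng.random() < 0.5:
                # Horizontal flip: the label must be flipped with the image.
                image = [row[::-1] for row in image]
                target = [row[::-1] for row in target]
            images.append(image)
            targets.append(target)
        return images, targets
```

Assuming the truncated base class is Keras's `tf.keras.utils.Sequence`, the same two methods would sit on a subclass of it so that `model.fit` can index batches directly. Note that flipping is only applied when `train` is true, which mirrors the `True`/`False` flag passed for the training and validation sets.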

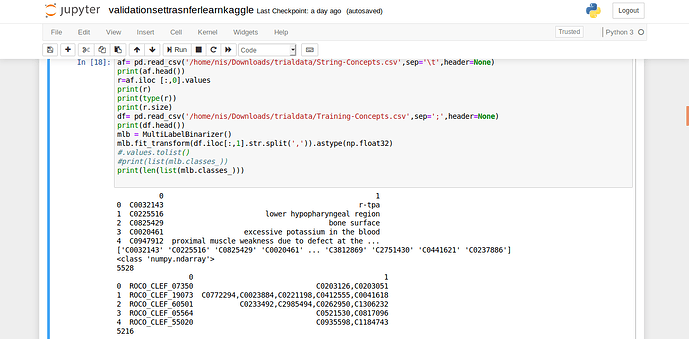

Keeping a separate testing folder lets you get a reasonable estimate of how good your model really is later on.

Now that you have the labels, you could get started coding, but I decided to also show you how to convert your data-set to COCO format, which makes your life a lot easier.

Convert your data-set to COCO-format

COCO has five annotation types: object detection, keypoint detection, stuff segmentation, panoptic segmentation, and image captioning. Instance segmentation uses the object detection format, in which every annotated instance carries a segmentation mask alongside its bounding box. The stuff segmentation format is identical to and fully compatible with the object detection format.

Training the model works just the same as training an object detection model. The only difference is that you'll need to use an instance segmentation model instead of an object detection model.

    from detectron2.engine import DefaultTrainer
    …
    cfg.merge_from_file(model_zoo.get_config_file("COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml"))
    cfg.DATASETS.TRAIN = ("microcontroller_train",)
    cfg.MODEL.WEIGHTS = model_zoo.get_checkpoint_url("COCO-InstanceSegmentation/mask_rcnn_R_50_FPN_3x.yaml")
    os.makedirs(cfg.OUTPUT_DIR, exist_ok=True)
    …
    trainer.train()

Figure 4: Training

Using the model for inference

After training, the model is automatically saved to a .pth file. This file can then be used to load the model and make predictions.

For inference, the DefaultPredictor class is used instead of the DefaultTrainer.

    from detectron2.utils.visualizer import ColorMode
    …
    cfg.MODEL.WEIGHTS = os.path.join(cfg.OUTPUT_DIR, "model_final.pth")
    cfg.MODEL.ROI_HEADS.SCORE_THRESH_TEST = 0.5
    cfg.DATASETS.TEST = ("microcontroller_test", )
    …
    dataset_dicts = get_microcontroller_dicts('Microcontroller Segmentation/test')
    for d in random.sample(dataset_dicts, 3):
        …
            instance_mode=ColorMode.IMAGE_BW  # remove the colors of unsegmented pixels
        …
        # the predictor returns a dict; the predictions live under "instances"
        v = v.draw_instance_predictions(outputs["instances"].to("cpu"))
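To make the COCO conversion step more concrete, here is a minimal sketch of turning labelme annotations into a COCO-style dictionary. This is not the converter the post uses. It assumes labelme's JSON layout (`imagePath`, `imageHeight`, `imageWidth`, and a `shapes` list whose entries carry `label`, `points`, and `shape_type`), handles polygon shapes only, and the function names `polygon_area` and `labelme_to_coco` are hypothetical.

```python
def polygon_area(points):
    """Area of a simple polygon via the shoelace formula."""
    area = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        area += x1 * y2 - x2 * y1
    return abs(area) / 2.0


def labelme_to_coco(labelme_files, category_names):
    """Convert already-parsed labelme JSON dicts into one COCO-style dict."""
    category_ids = {name: i + 1 for i, name in enumerate(category_names)}
    coco = {
        "images": [],
        "annotations": [],
        "categories": [{"id": cid, "name": name}
                       for name, cid in category_ids.items()],
    }
    ann_id = 1
    for image_id, data in enumerate(labelme_files, start=1):
        coco["images"].append({
            "id": image_id,
            "file_name": data["imagePath"],
            "height": data["imageHeight"],
            "width": data["imageWidth"],
        })
        for shape in data["shapes"]:
            if shape.get("shape_type", "polygon") != "polygon":
                continue  # this sketch skips rectangles, circles, etc.
            points = shape["points"]
            xs = [p[0] for p in points]
            ys = [p[1] for p in points]
            coco["annotations"].append({
                "id": ann_id,
                "image_id": image_id,
                "category_id": category_ids[shape["label"]],
                # COCO stores each polygon as a flat [x1, y1, x2, y2, ...] list
                "segmentation": [[c for p in points for c in p]],
                # COCO boxes are [x, y, width, height]
                "bbox": [min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)],
                "area": polygon_area(points),
                "iscrowd": 0,
            })
            ann_id += 1
    return coco
```

Each labelme file would be loaded with `json.load` first and the resulting dictionary written out with `json.dump`, once for the training folder and once for the testing folder.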

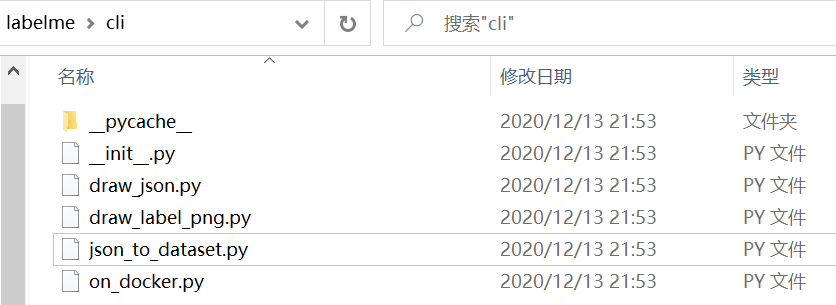

Figure 3: Labeling imagesĪfter you're done labeling the images, I'd recommend splitting the data into two folders – a training and a testing folder. Now you can click on "Open Dir", select the folder with the images inside, and start labeling your images. Labelme can be installed using pip: pip install labelmeĪfter installing Labelme, you can start it by typing labelme inside the command line. I chose labelme because of its simplicity to both install and use. For Image Segmentation / Instance Segmentation, there are multiple great annotation tools available, including VGG Image Annotation Tool, labelme, and PixelAnnotationTool. To label the data, you will need to use a labeling software.įor object detection, we used LabelImg, an excellent image annotation tool supporting both PascalVOC and Yolo format. Figure 1: Examples of collected images Labeling dataĪfter gathering enough images, it's time to label them so your model knows what to learn.
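One way to script the train/test split is sketched below. The function name `split_train_test`, the 20% default test fraction, and the fixed seed are assumptions for illustration, not values from this post.

```python
import random


def split_train_test(names, test_fraction=0.2, seed=42):
    """Shuffle names reproducibly and return (train, test) lists."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be between 0 and 1")
    # Sort first so the result does not depend on directory listing order.
    shuffled = sorted(names)
    random.Random(seed).shuffle(shuffled)
    # Always keep at least one item for testing.
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]
```

With the two lists in hand, each image and its matching labelme JSON file can be moved into the corresponding folder, for example with `shutil.move`. Fixing the seed keeps the split stable between runs, so later evaluation numbers stay comparable.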
